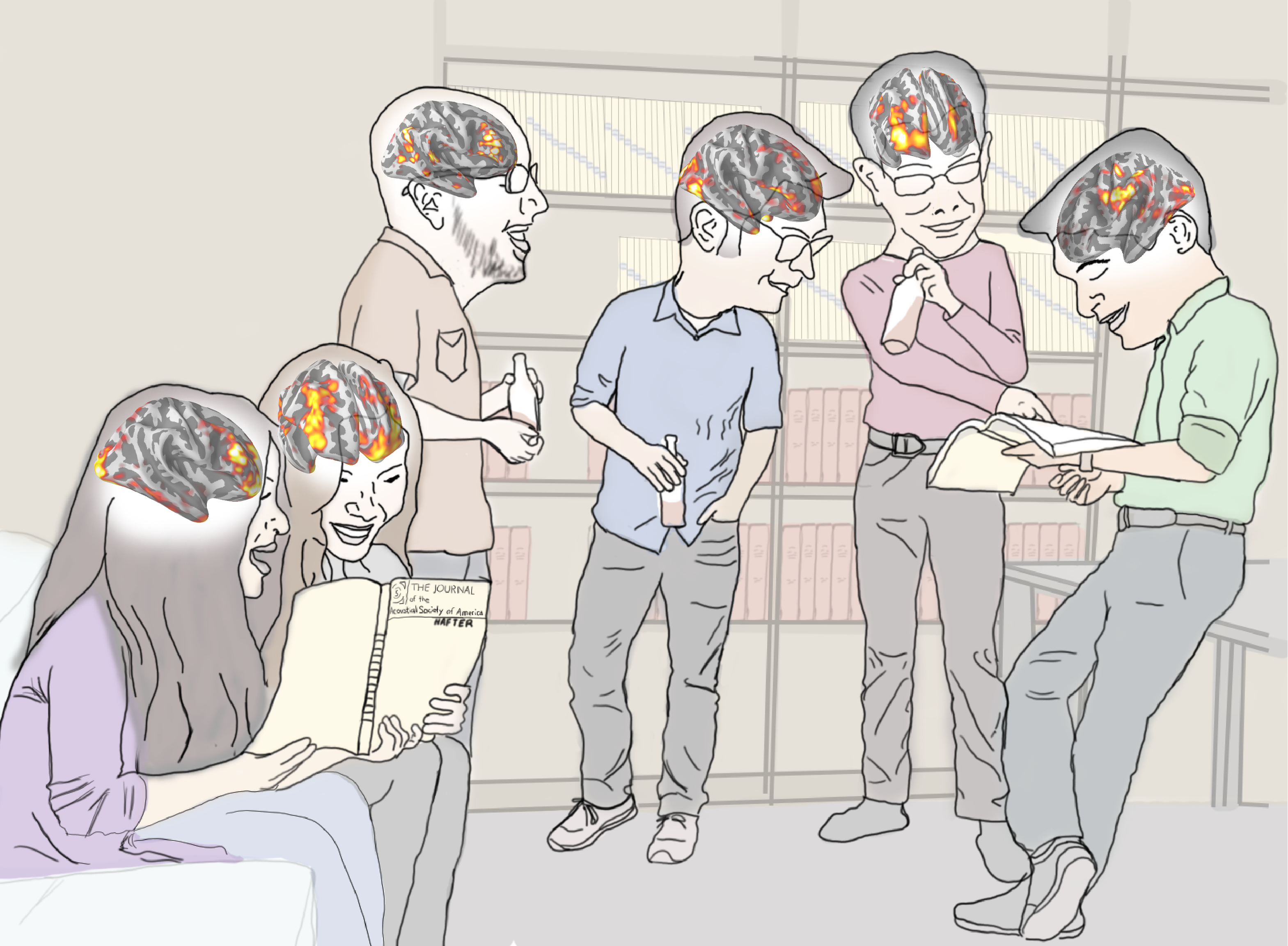

Mapping the cortical network involved in auditory attention

Sound arriving at our ears is a sum of acoustical energy from all the auditory sources in the environment. In order to dynamically follow different conversations in a crowded room, our brain must constantly direct attention to the auditory signal of interest and segregate sound that originated from other uninteresting sources. The fundamental neural circuitry and dynamics involved in this cognitive process, known as auditory scene analysis (ASA), are not well understood. We are currently studying the underlying neural bases of auditory attention in normal-hearing young adults and mapping its temporal characteristics across different cortical regions in ASA. [Funding agency: NIH-NIDCD]

Audiovisual binding

When you are in a crowded bar, it is easier to understand conversation if you can pair your friend’s face and mouth movement with her voice. Similarly, you can pick out the melody of the first violin in a quartet more easily when you can watch the player’s bow action. Even though what we hear and see take different physical forms and are encoded by different sensory receptor organs, we can effortlessly bind auditory and visual features together to create a coherent percept. How does our brain combine information across sensory modalities? Does it integrate information across modalities and then make a perceptual judgment? Does it bind perceptual features together into a unified crossmodal object? We combine human psychophysics and multimodal neuroimaging approaches (along with our collaborators’ research using animal models) to answer these and other questions about audiovisual binding.

Characterizing central auditory processing dysfunctions

Despite having normal hearing thresholds, some listeners struggle to communicate in everyday settings, especially in the presence of other talkers, background noise, or room reverberation. A portion of these listeners might be diagnosed as having a (central) auditory processing disorder. What is the neurobiological basis for such suprathreshold deficits (i.e., problems processing important features of sound even though it is loud enough to hear)? In our laboratory, we use a battery of psychoacoustical and neurophysiological tests to determine whether these deficits are attributable to imprecise temporal coding in the periphery / brainstem, or to deficiencies in the cortical auditory attentional network. In a collaboration with the UW Autism Center and the Fetal Alcohol Syndrome Diagnostic & Prevention Network, we are studying listeners older than 13 (with or without known neurodevelopmental disorders) to see how they differ in their suprathreshold listening abilities. [Funding agency: NSF-CRCNS]

Cognitive load assessment in complex acoustic environments

Comprehending speech is usually easy for most people, but even those of us with excellent hearing can at times struggle to comprehend speech (e.g., watching a TV interview of someone with an accent -- say with a mix of Cantonese and Australian twang -- while your kids are screaming in the background). Many factors influence how effortful speech processing can be: presence of background noise, how healthy our ears are, past experience with a dialect or accent, and even factors associated with cognitive control (e.g., how often you have to switch attention to get the gist of a conversation). Our laboratory uses both neuroimaging and pupillometry (measuring real-time changes in pupil size) to investigate how listening in different scenarios can incur a behavioral cost (a.k.a. higher cognitive load, increased listening effort). Specifically, we are interested in characterizing the cognitive load associated with speech that has been degraded spectrotemporally (e.g., using vocoder simulations). This is clinically important because it has been hypothesized that making sense of the auditory world (a.k.a. auditory scene analysis and object formation) is less effective in listeners with hearing loss than in normal-hearing listeners.

Using neuroscience to design a next-generation Brain-Computer Interface

Harnessing the capability of reading and classifying brainwaves into the myriad of possible human cognitive states (referred to as brain-states) has been a long-standing engineering challenge. We are currently investigating how to best leverage the latest neuroscience knowledge to transform the current engineering approach in brain-state classification. By systematically assessing how we can capitalize on the similarity in brain function across subjects (a traditional neuroscience approach) and optimally incorporate a priori information to maximize classification algorithm performance at an individual level (a traditional engineering goal), we will be able to elucidate the benefit of an innovative, integrated neuroengineering approach in improving our ability to classify human brain-states. [Funding agency: DoD-ONR, UWIN@UW]