ABCs of the ATK

Introduction

The Ambisonic Toolkit (ATK) aspires to offer a robust and well documented research grade library of tools for the SuperCollider user.

Two toolsets in one

These tools are organized into two sets.

The first is modelled after the hardware and software tools offered in the world of classic, aka Gerzonic, First Order Ambisonics (FOA), while the second is framed as a modern Near-Field Controlled Higher Order Ambisonic (NFC-HOA) solution.

Moving between these sets is facilitated through parallel tools for both FOA and HOA, and through documentation on exchanging Ambisonic signals between these two Ambisonic encoding conventions.

The tools are provided as open building blocks for Ambisonic workflows, signal synthesis and processing. The HOA toolset, in particular, is weighted towards providing resources for implementations and design patterns rather than multiple, closed black box solutions.

Theory & practice

The ATK is built upon the direct calculation of Ambisonic encoding coefficients. Since the inclusion of Special Functions from the Boost library, fast coefficient calculations are available both in sclang and on the sound synthesis server.

In-library solutions for a complete Ambisonic workflow are either available directly or are otherwise documented.

Learning

An expanding collection of verbose guides and tutorials is provided to facilitate learning Ambisonic and ATK use patterns. Users are invited to help expand this documentation by both requesting and submitting guides and tutorials.

The paradigm

The ATK brings together a number of classic and novel tools and transforms for the artist working with Ambisonic surround sound and makes these available to the SuperCollider user. The toolset is intended to be both ergonomic and comprehensive, and is framed so that the user is encouraged to think Ambisonically. By this, it is meant that the ATK addresses the holistic problem of creatively controlling a complete soundfield, allowing and encouraging the artist to think beyond the placement of sounds in a sound-space (sound-scene paradigm). Instead, the artist is encouraged to attend to the impression and imaging of a soundfield, thereby taking advantage of the native soundfield-kernel paradigm the Ambisonic technique presents.

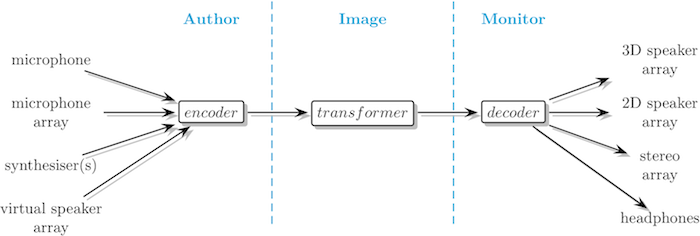

The ATK's production model is illustrated below:

ATK paradigm

Here you'll see that the ATK breaks down the task of working with Ambisonics into three separate elements:

- Author

- Capture or synthesise an Ambisonic soundfield.

- Image

- Spatially filter an Ambisonic soundfield.

- Monitor

- Playback or render an Ambisonic soundfield.

Features

Some notable features of the ATK include:

- Integrated support for classic First Order Ambisonics (FOA) and modern Higher Order Ambisonics (HOA).

- Implements the Near-Field Controlled, aka Near-Field Compensated, form of higher order Ambisonics (NFC-HOA).

- Ambisonic order is limited only by system speed, channel capacity and numerical precision rather than by design.

- Control and synthesis of the near-field effect (NFE) of finite distance sources in both FOA and HOA, including radial beamforming.

- Soundfield feature sensing & analysis in instantaneous and time averaged forms.

- Ambisonic coefficients and matrices are available for inspection and manipulation in the interpreter.

- Angular domain soundfield decomposition and recomposition.

- Analysis of transformer and decoder matrices.

Installation

Instructions for installing the complete system are found HERE.

If you're reading this document, at least one part of the ATK, the atk-sc3 quark, has most likely been correctly installed.

Components

The complete ATK library is built of a number of components. These are:

- pseudo-UGens, classes, extension methods and documentation found in the atk-sc3 quark

- ATK UGens found in sc3-plugins

- kernels, matrices & soundfiles found in the ATK repository

If you've successfully completed the steps described above, you should have a working installation of the ATK. Let's review each of these:

- quark

- We can easily inspect the quark and installed quark dependencies via the QuarksGui:

Though we already know the answer, we can also run this test:

If the quark has been installed in Quarks.folder, we can poke about:

- sc3-plugins

- Similarly, if the UGens found in sc3-plugins have been installed in Platform.userExtensionDir:

- kernels, matrices & soundfiles

- And, if the kernels, matrices and soundfiles have been installed in each of the related user directories, we can inspect:

Suggested extensions

Users can expand the functionality of the ATK by adding additional extensions. The following are suggested.

ADT

The ADT quark offers a convenient interface to the Ambisonic Decoder Toolbox, a collection of functions for creating Ambisonic decoders. The interface supplied by the quark has been designed to facilitate use with the ATK.

At the time of this writing, the ADT quark has not yet been included in the SuperCollider Quark directory, meaning that the QuarksGui cannot be used to install it.

You can install the quark:

You'll then need to complete the installation by installing the Ambisonic Decoder Toolbox library and Octave.

Follow the instructions found here.

AmbiVerbSC

Everyone loves reverb! Built with the ATK, the AmbiVerbSC quark provides a native first order Ambisonic tank style reverb algorithm.

As with the ADT quark, the AmbiVerbSC quark has not yet been included in the SuperCollider Quark directory.

To install the quark:

Related packages

Support for Ambisonics within SuperCollider has existed in a variety of forms over the years. The principal form of this support has been via unit generators supplied for monophonic source encoding and two dimensional, aka pantophonic, decoding.

Over the years, a number of related but different Ambisonic encoding formats have been used. (These formats are discussed in more detail, below.) For now, the thing to keep in mind is that when we use tools from multiple packages, we need to be sure to correctly exchange Ambisonic encoding formats where these differ.

When you've fully completed the installation of the ATK, you'll automagically also have two other packages installed: the SuperCollider builtins and those found in JoshUGens. The other two, AmbIEM and SC-HOA, require additional steps.

For ease of comparison, the UGens supplied by each package are listed according to the ATK's UGen classification convention. (In this text we'll refer to a particular Ambisonic UGen as a type of Ambisonic UGen.)

Any additional utilities or functionality supplied by these packages are not discussed here.

If at some point you find a collision with the below packages (or any other), please let us know: How do I report a bug?

SuperCollider

The original author of SuperCollider has provided basic support for First Order Ambisonics (FOA) via the following unit generators:

Many SuperCollider users have been introduced to Ambisonics through experimentation with these UGens.

(Follow this link to review SuperCollider's encoding format.)

JoshUGens

As with the ATK, the JoshUGens collection is also distributed via sc3-plugins, and offers further implementations in first and second order Ambisonic forms.

The following unit generators are supplied:

- Encoder

- BFEncode, BFEncode1, BFEncode2, BFEncodeSter, FMHEncode0, FMHEncode1, FMHEncode2, A2B, UHJ2B, UHJtoB

- Transformer

- BFManipulate, Rotate, Tilt, Tumble, BFFreeVerb, BFGVerb

- Decoder

- BFDecode, BFDecode1, FMHDecode1, B2Ster, B2A, B2UHJ, BtoUHJ

One of the authors of the JoshUGens package is a significant contributor to the ATK.

(Follow this link to review JoshUGens's encoding format.)

E.g., rather than using JoshUGens B2UHJ (or BtoUHJ), users should employ the ATK's UHJ implementation: FoaDecoderKernel: *newUHJ.

AmbIEM

The AmbIEM library is distributed via the AmbIEM quark, and offers support for first, second and third order Ambisonic tools.

If you've installed this quark you can search for the AmbIEM overview page, or navigate to the library's distributed Help.

AmbIEM supplies the following unit generators:

- Encoder

- PanAmbi1O, PanAmbi2O, PanAmbi3O

- Transformer

- RotateAmbi1O, RotateAmbi2O, RotateAmbi3O

- Decoder

- DecodeAmbi2O, DecodeAmbi3O, BinAmbi3O

(Follow this link to review AmbIEM's encoding format.)

At the time of this writing, the suggested link found in the source code appears to be broken.

Instead, the currently live link to this dependency, full.tar.Z, appears to be here.

3Dj

Developed in collaboration with Barcelona Media, the 3Dj library is distributed via the 3Dj quark. Support is offered for first, second and third order Ambisonics.

If you've installed this quark you can search for the 3Dj overview page, or navigate to the library's distributed Help.

3Dj supplies the following unit generators:

- Encoder

- AmbEnc1, AmbEnc2, AmbEnc3, AmbMEnc1, AmbMEnc2, AmbMEnc3, AmbMrEnc1, AmbMrEnc2, AmbMrEnc3, AmbREnc1, AmbREnc2, AmbREnc3, AmbSMEnc1, AmbSMEnc2, AmbSMEnc3, AmbSMrEnc1, AmbSMrEnc2, AmbSMrEnc3, AmbXEnc1, AmbXEnc2, AmbXEnc3

(Follow this link to review 3Dj's encoding format.)

SC-HOA

Like the ATK and JoshUGens, the SC-HOA library, aka HOA for SuperCollider, has components distributed via sc3-plugins. Additionally, for a full installation, the SC-HOA quark, which includes kernel assets, must also be installed. This library, built on ambitools, provides comprehensive support for first through fifth order Ambisonics.

If you've installed this quark you can search for the HOA Guide overview page, or navigate to the library's distributed Help. SC-HOA includes tutorials, too, which will be found here if installed.

SC-HOA provides the following user-facing unit generators:

- Encoder

- HOAEncoder, HOAmbiPanner, HOALibEnc3D, HOAEncLebedev06, HOAEncLebedev26, HOAEncLebedev50, HOAEncEigenMike, HOAConvert

- Transformer

- HOATransRotateAz, HOATransRotateXYZ, HOATransMirror, HOABeamHCard2Hoa, HOABeamDirac2Hoa, HOALibOptim

- Decoder

- HOADec5_0, HOABeamHCard2Mono, HOABinaural, Lebedev50BinauralDecoder, HOADecLebedev06, HOADecLebedev26, HOADecLebedev50, HOAConvert

(Follow this link to review SC-HOA's encoding format.)

Ambisonic formats

For users new to the world of Ambisonics, perhaps one of the most confusing aspects is the use of the term format. This confusion is understandable, given how this word has been used differently over time and in different contexts.

One way this word is used is to describe the Ambisonic encoding format, aka the encoding convention, of an Ambisonic signal. Another use of the word is to describe the spatial domain of an Ambisonic signal.

We'll consider each of these uses in the following two sub-sections.

Encoding formats

Here we'll briefly review the flavors of Ambisonics.

If you haven't already, a great place to start with understanding encoding formats is to review:

Given an Ambisonic signal, there are four things we need to know for the encoding format (or convention) to be completely specified and unambiguous. These are:

- Ambisonic order

- An integer describing the spatial resolution. Specifies the maximum Associated Legendre degree, ℓ, of a given signal or coefficient set. We can think of Ambisonic order as a spatial sampling rate.

- Ambisonic component ordering

- Sorting or ordering convention applied to arrange spherical harmonic encoding coefficients and resulting signal sets. E.g., Furse-Malham (FuMa), Ambisonic Channel Number (ACN), Single Index Designation (SID). See ordering.

- Ambisonic component normalisation

- Spherical harmonic coefficient normalisation convention. E.g., maxN, N3D, SN3D. See normalisation.

- Ambisonic reference radius

- Encoding radius where all modal components are real. We can think of this as the radius at which the associated virtual loudspeakers are found.

The ATK measures this parameter in meters.

The ATK will report these details for the FOA implementation:

The ATK uses the normalisation indicator FuMa as a synonym for MaxN to indicate Furse-Malham normalisation in the form compatible with classic Gerzonic Ambisonic encoding.

And the HOA implementation:

The very first, Ambisonic order, directly indicates the number of channels (or coefficients) an encoded signal will have. Order specifies the spatial resolution of the soundfield.

Here's the math to report the number of channels or coefficients:

The ATK's HOA toolset offers another way of reporting the number of channels, through a method for HoaOrder:

Clearly, more channels means more spatial information, which means a soundfield with more spatial resolution.
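For a full 3D signal of Ambisonic order N, the channel count is (N + 1)². The following is a minimal sketch of that arithmetic, written in Python as neutral notation rather than as ATK code; `channel_count` is a hypothetical helper name, not part of the ATK.

```python
def channel_count(order: int) -> int:
    """Number of channels (coefficients) of a full 3D Ambisonic signal."""
    if order < 0:
        raise ValueError("Ambisonic order must be non-negative")
    return (order + 1) ** 2


# List the channel counts for first through fifth order.
for order in range(1, 6):
    print(order, channel_count(order))
```

Each step up in order adds 2N + 1 channels, which is why channel capacity quickly becomes a practical limit at higher orders.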

________________

For convenience, the ATK uses the keyword format to indicate component ordering and component normalisation, grouped together:

This use of the word format, to specify both ordering and normalisation, is the most common in the era of modern Ambisonics. Another modern practice is to use the word flavors and to speak of flavors of Ambisonics.

So, users often speak of a signal being encoded as ACN-N3D or ACN-SN3D, the latter being known as AmbiX.
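To make the two halves of a format concrete, here is a minimal Python sketch (not ATK code) of the ACN ordering rule and of the gain relating SN3D to N3D. The helper names `acn_index` and `sn3d_to_n3d_gain` are hypothetical; the formulas are the standard definitions of these conventions, with ℓ the Associated Legendre degree and m the index within that degree.

```python
import math


def acn_index(degree: int, index: int) -> int:
    """ACN channel number for degree l and index m, where -l <= m <= l."""
    if abs(index) > degree:
        raise ValueError("index must satisfy -degree <= index <= degree")
    return degree * degree + degree + index


def sn3d_to_n3d_gain(degree: int) -> float:
    """Gain applied to an SN3D component of the given degree to obtain N3D."""
    return math.sqrt(2 * degree + 1)


# First order: ACN places the components in the order W, Y, Z, X.
for name, (degree, index) in {"W": (0, 0), "Y": (1, -1), "Z": (1, 0), "X": (1, 1)}.items():
    print(name, acn_index(degree, index), sn3d_to_n3d_gain(degree))
```

Ordering only changes where a component sits in the channel list, while normalisation only changes its gain, which is why the two can be specified independently and then grouped under one format keyword.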

We can also look up common encoding convention formats:

________________

The ATK uses another designation, set, to group all these features together. This can refer to an Ambisonic signal or a tool set.

For instance a signal described as HOA5 is specified as:

________________

Let's now consider the toolsets and Ambisonic encoding formats provided by the above related packages (package names link to UGen lists, above):

________________

In reviewing the above, we can note:

- SuperCollider

- Encodes in the ATK's FOA encoding format, aka classic Gerzonic Ambisonics

- JoshUGens

- Encodes first order in the ATK's FOA encoding format, but does not encode in the ATK's HOA encoding format

- AmbIEM

- Does not encode in the ATK's HOA encoding format

- 3Dj

- Nearly encodes in the ATK's HOA encoding format

- SC-HOA

- Nearly encodes in the ATK's HOA encoding format

________________

Exchanging formats

As you would expect, the ATK does facilitate exchanging signals encoded with different conventions, so we can use all these libraries together if we'd like. We just need to take care to translate signals correctly for the given library.

Because the ATK uses the below convention to designate Ambisonic operations, we can consider the conversion of an FOA signal to HOA1 in two different, but equivalent, ways:

- decode FOA to HOA1

- encode HOA1 from FOA

This operation is known as format exchange.
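As a rough illustration of what such an exchange involves at first order, the Python sketch below (not ATK code) reorders and rescales a single Furse-Malham frame into ACN-N3D. It assumes the classic Furse-Malham first order convention (channels W, X, Y, Z with W scaled by 1/√2) and deliberately ignores any difference in reference radius, so it covers only the ordering and normalisation parts of the problem. The function name `fuma1_to_acn_n3d` is hypothetical.

```python
import math


def fuma1_to_acn_n3d(frame):
    """Convert one first order frame from FuMa (W, X, Y, Z) to ACN-N3D (W, Y, Z, X).

    Handles component ordering and normalisation only.
    Reference radius is NOT addressed here.
    """
    w, x, y, z = frame
    return [
        math.sqrt(2) * w,  # undo the 1/sqrt(2) scaling of FuMa W
        math.sqrt(3) * y,  # degree 1 components: SN3D -> N3D gain is sqrt(3)
        math.sqrt(3) * z,
        math.sqrt(3) * x,
    ]


# A unit plane wave arriving from directly in front, encoded in FuMa.
front_fuma = [1 / math.sqrt(2), 1.0, 0.0, 0.0]
print(fuma1_to_acn_n3d(front_fuma))
```

For real work, use the ATK's own exchange tools described in Ambisonic Format Exchange, which also account for the remaining parts of the encoding convention.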

Review the practical examples found here: Ambisonic Format Exchange

Domain formats

In this section we'll discuss Ambisonic spatial domain formats.

We'll start by reviewing the Oxford University Tape Recording Society (OUTRS) tetrahedral recording experiment of 1971, briefly list the various letter named Ambisonic domain formats found in the wild, and finish with the conventions the ATK has adopted to address this part of the Ambisonic puzzle.

________________

We could suggest that Ambisonics began in 1971 with a series of articles entitled "Experimental Tetrahedral Recording". These were prepared by Michael Gerzon for the British magazine Studio Sound, and detailed an experimental recording made by Gerzon and the OUTRS. We can separate this experiment into two constituent tasks, capturing and then reconstructing a soundfield, along with a third problem to consider: archiving.

Let's open part one of the series:

Figure 1 illustrates the arrangement of the loudspeakers, and the directions in which the cardioid microphones are pointed, the microphone look directions.

In figure 2, if we follow the bottom path of the network from FOUR MIKES through to TETRAHEDRAL SPEAKER LAYOUT, we see the complete pathway for capture to reconstruct.

The four microphones feed the four associated loudspeakers, but are filtered by a block labelled COMMON MODE REDUCTION MATRIX. Gerzon explains:

"In the experiment, hypercardioids were simulated by using a common mode reduction circuit to reduce the common mode (i.e. omnidirectional) component of the four cardioid signals."

Rather than feeding the loudspeakers directly, the microphone polar responses are modified from cardioid to another, variable pattern. The matrix makes each microphone's spatial response more selective. (Look directions stay the same.) Also note, though not yet named so, the "common mode" to be reduced is B-format's W.

Here's a matrix corresponding to setting the variable resistor parameter, VR = 0.183 R, which corresponds to a null in the synthesized microphone response pattern at approximately 125 degrees:

We can view this matrix as a mixer where each loudspeaker is fed by a mix from all the microphones. For instance, the buss feeding the loudspeaker in the front-left-up position consists of a mix of the named microphones:

- front-left-up: rescale by +1.09 dB

- back-right-up: rescale by -17.46 dB & invert polarity

- front-right-down: rescale by -17.46 dB & invert polarity

- back-left-down: rescale by -17.46 dB & invert polarity
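The mix values above can be checked against the stated null angle. The Python sketch below (not ATK code) converts the dB values to linear gains and derives the resulting polar pattern. It rests on three labelled assumptions: every row of the matrix has the same structure as the front-left-up row (by symmetry), each microphone is an ideal cardioid 0.5 + 0.5 cos(angle), and the four look directions form a regular tetrahedron, so their direction vectors sum to zero.

```python
import math


def db_to_gain(db: float) -> float:
    """Convert a level in dB to a linear amplitude gain."""
    return 10 ** (db / 20)


direct = db_to_gain(1.09)    # gain applied to the microphone's own signal
cross = -db_to_gain(-17.46)  # gain applied to each other microphone (polarity inverted)

# Assumption: all four rows share this structure, giving a symmetric 4 x 4 mixer.
matrix = [[direct if row == col else cross for col in range(4)] for row in range(4)]

# The omnidirectional (common mode) part is identical in all four cardioids,
# so it is scaled by the sum of one row.
omni_gain = direct + 3 * cross

# The directional parts of the other three microphones sum to minus this
# microphone's own look direction (tetrahedral symmetry), so the inverted
# cross terms add to the direct term.
directional_gain = direct - cross

# Ideal cardioid: 0.5 + 0.5 * cos(angle). Apply the two gains and find the null.
omni_part = 0.5 * omni_gain
figure8_part = 0.5 * directional_gain
null_degrees = math.degrees(math.acos(-omni_part / figure8_part))

print(round(direct, 3), round(cross, 3), round(null_degrees, 1))
```

Under these assumptions the common mode is reduced relative to the directional part, and the null lands close to the figure of approximately 125 degrees quoted above.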

Here's a photo of OUTRS member Richard Cowderoy with the microphone array. And here's a photo of a listening session. (The upper two loudspeakers of the loudspeaker array are visible on the two ladders.)

For archiving, the four microphone signals are just directly recorded.

Regarding the microphones and associated matrixing, in part two Gerzon observes:

"A high degree of coincidence is desirable, as only then is it possible to obtain by a suitable matrixing of the four output signals any possible cardioid or hypercardioid output pointing in any possible direction."

Suggesting the world of Ambisonics to come, Gerzon is observing that given a tetrahedral recording in the form described, alternate, virtual microphones with any (first order) pattern and look direction can be synthesized.

________________

Letter-formats

A significant amount of confusion regarding Ambisonic domain formats stems from the variety of what we might call letter-formats that have arisen over time.

The list below describes the form in which most practitioners would expect to find a soundfield for each letter-format:

- A-format

- microphone capsule signals

- B-format

- Ambisonic encoding format

- C-format

- consumer distribution form

- D-format

- loudspeaker feeds

- E-format

- never in wide use, related to C-format

- G-format

- loudspeaker feeds: ITU 5.1

- P-format

- angular domain decomposition

Ideally, the complete, full 3D soundfield is available for retrieval, and each letter is just a different domain encoding.

Referring back to the OUTRS experiment and Gerzon's figure 2, we can find only two complete soundfield forms. The first corresponds to A-format, and is noted as being recorded as gain and EQ corrected microphone capsule feeds by FOUR-CHANNEL TAPE RECORDERS. The other is D-format, the collection of the actual loudspeaker feeds.

If we're interested in exchanging tetrahedral recordings, however, we immediately have problems. Doh!

In part two, Gerzon states, "The four capsules should certainly lie within a sphere of 5 cm diameter, and preferably less, in order to ensure that phase effects do not upset the matrixing." Beginning with Gerzon's comment on capsule spacing, here's a short list of interrelated factors that must be either specified or compensated for to have a truly exchangeable A-format:

- number of microphone capsules

- capsule offset(s) from true coincidence

- capsule look directions

- capsule polar response pattern(s)

- capsule frequency response(s)

Assuming we have a D-format intended to directly feed loudspeakers given no further signal processing, to have an exchangeable D-format we need to know:

- number of loudspeakers

- loudspeaker distance(s) from array origin

- loudspeaker look directions

- capsule polar response pattern(s) used to create loudspeaker feeds

- loudspeaker frequency response(s)

The remedy to these high demands for capturing, reconstructing and archiving tetrahedral recordings is B-format.

________________

For the sake of a manageable and simplified workflow, the ATK considers (complete) Ambisonic soundfields as existing in only two domain forms. Spatial information is represented in terms of:

- angular domain

- angular basis functions

- spherical domain

- spherical basis functions

The angular basis functions have an unambiguous look direction and are described as having a beam shape.

When we are encoding, or measuring, a soundfield in either of these domains, we are measuring how much the soundfield is like a particular basis function.

The ATK uses the following convention to associate these domains with letter-formats:

- A-format

- Component or signal set in the angular domain, evenly distributed across the surface of the sphere in a spherical design. Within the context of the Ambisonic Toolkit, any required equalisation or radial filtering is expected to have been applied. In other words, the Ambisonic Toolkit views A-format as a uniform spherical decomposition of the soundfield.

- B-format

- Component or signal set in the spherical domain. In classic first order Ambisonics, aka Gerzonic, B-format indicates a signal encoded where Ambisonic order n = 1, and components are ordered and normalised by the (now named) Furse-Malham and MaxN conventions. B-format is often used to indicate any Ambisonic signal set, without regard to Ambisonic order, component ordering or normalisation.

As discussed earlier and to facilitate workflows, the ATK prefers to refer to signals and tools as being part of a signal or tool set, as this designation completely disambiguates the problem.

Further updating the meaning of HOA5:

- HOA5

To be encoded in the spherical domain in the ATK workflow, an Ambisonic signal is completely specified. There are no ambiguities.

In the ATK, B-format is completely specified and means FOA:

- FOA

________________

Spherical decomposition & recomposition

We can characterize the task of moving from the spherical domain to the angular domain as one of uniform spherical decomposition. Moving in the opposite direction is spherical recomposition.

As you would expect, to be completely disambiguated, any exchangeable decomposition must have all set features known and specified.

There are three more aspects that must be known. A beam is a window in the spatial domain. We can understand a single beam as a virtual microphone and/or a virtual loudspeaker. (Think of Gerzon's experiment.)

Reviewing the above, beams of the same shape, but with differing look directions are collected as angular basis functions to describe the soundfield in the angular domain, while spherical harmonics are the collection used to describe the soundfield in the spherical domain.

We're representing the same information, but just in another way. This different way of expression is the difference between these two spatial domains: two different sets of spatial basis functions.

So, to specify a collection of angular basis functions, we need to choose:

- number of beams

- beam look directions

- beam shape
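The idea that both domains carry the same information can be sketched at first order. The Python example below (not ATK code, and not the ATK's TDesign workflow) chooses four beams, the tetrahedral look directions of Gerzon's experiment, and an unweighted first order beam shape, then decomposes a spherical domain frame into four beam signals and recomposes it. The ACN-N3D convention, the placement of the 1/4 scaling in `recompose`, and all function names are assumptions made for illustration.

```python
import math

S = 1 / math.sqrt(3)

# Look directions as (x, y, z) unit vectors, with x front, y left, z up:
# front-left-up, back-right-up, front-right-down, back-left-down.
LOOK_DIRECTIONS = [(S, S, S), (-S, -S, S), (S, -S, -S), (-S, S, -S)]


def harmonics(direction):
    """First order spherical harmonics in ACN-N3D (W, Y, Z, X) for a unit vector."""
    x, y, z = direction
    root3 = math.sqrt(3)
    return [1.0, root3 * y, root3 * z, root3 * x]


def decompose(spherical):
    """Spherical domain -> angular domain: one beam signal per look direction."""
    return [
        sum(h * c for h, c in zip(harmonics(d), spherical))
        for d in LOOK_DIRECTIONS
    ]


def recompose(angular):
    """Angular domain -> spherical domain: re-encode each beam and average."""
    count = len(LOOK_DIRECTIONS)
    return [
        sum(harmonics(d)[k] * s for d, s in zip(LOOK_DIRECTIONS, angular)) / count
        for k in range(4)
    ]


frame = [0.3, -0.2, 0.5, 0.1]  # an arbitrary first order frame
round_trip = recompose(decompose(frame))
print(round_trip)
```

The round trip returns the original frame (to within floating point error) because the four look directions sample the sphere evenly enough for first order. With too few beams, or unevenly placed ones, the recomposition would no longer recover the input.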

For HOA, the ATK uses TDesign to help us specify the first two features. Here we will find a spherical t-design given some criteria:

And... we can view!

We can directly associate the minimum number of beams for a given Ambisonic order with a kind of angular spatial sampling rate. For instance, we get an error if we ask for too few beams:

If this happens, we can easily find what designs are available:

The ATK's use pattern for decomposition looks like this:

And, recomposition is re-encoding:

We can check:

You'll find further discussion here: Spherical Decomposition.

Library architecture

The help browsing hierarchy is formatted to illustrate the structure of the ATK from a user perspective.

Getting Help

Let's briefly explore the presented categories. Following the links below will navigate the browser to places to find relevant documentation on the listed topic. Some of these links land on splash page overviews which include a brief description of help to be found in that section.

Casually explore the structure of the ATK by taking some time to navigate these links. (Use the browser back button to return to this page.)

- Ambisonic Toolkit

- ATK Platform & Configuration

- various ATK system settings and configurations

- Coefficients & Theory

- foundational ambisonic coefficients &c

- Guides & Tutorials

- documents exploring how to use the ATK

- Licensing

- licensing documents and notices

- Matrix & Kernel

- classes returning matrices and kernels for use with corresponding pseudo-UGens

- UGens

- UGens and pseudo-UGens

- FOA

- user facing FOA UGens and pseudo-UGens

- HOA

- user facing HOA UGens and pseudo-UGens

- Internals

- base classes and other utilities (NOTE: only for the curious!)

- Utilities

- user facing high level utilities

- FOA

To make finding the ATK's help overviews convenient, the titles of these pages all begin with ABCs.

Try this: Search: abcs

Ambisonic UGen types

The ATK uses the keyword type to designate Ambisonic soundfield operations.

UGens are classified:

- Encoder

- receive: some signal; return: spherical domain signal

- Transformer

- receive: spherical domain signal; return: spherical domain signal

- Decoder

- receive: spherical domain signal; return: some signal

In other words, an FOA signal is not an HOA1 signal. See the discussion on exchanging encoding formats.

The ATK classifies UGens and related functions returning soundfield operations in terms of expected inputs and outputs. As a result we only have three types.

________________

As an aside, UGens offering Ambisonic digital audio effects are outside the scope of the Ambisonic Toolkit.

E.g., the ATK does not supply Ambisonic reverberators and granulators. Instead, the ATK supplies the tools and infrastructure necessary to design and build Ambisonic reverberators and granulators. See, for instance AmbiVerbSC.

Matrix & Kernel operations

As we've seen above, for a complete install the ATK requires the successful installation of all components, which include matrix and kernel assets.

In the abstract mathy sense, Ambisonic soundfield operations can be described as Ambisonic soundfield kernel convolutions. Sounds difficult!

It also sounds like the implementation of these operations will require convolution. If you've been poking around the UGen internals, you'll have spotted: AtkKernelConv. This is the little workhorse handling any required convolution.

The observant reader will also have noticed another UGen found in this location: AtkMatrixMix.

________________

Thankfully, Ambisonic operations can be simplified given two conditions:

- the operation does not require complex coefficients

- the operation is not frequency dependent

If these two conditions are met, the operation can be simplified to multiply and add, or matrix mixing. In other words, the operation can be represented as a matrix of real numbers.

This makes life much easier!
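As an illustration of an operation that meets both conditions, the Python sketch below (not ATK code) applies a rotation about the vertical axis to one first order frame using nothing but multiply and add. It assumes channels ordered W, X, Y, Z with counter-clockwise positive angles; `matrix_mix` and `rotate_z` are hypothetical names and do not reflect how AtkMatrixMix is implemented.

```python
import math


def matrix_mix(matrix, frame):
    """Multiply and add: each output channel is a weighted sum of the inputs."""
    return [sum(gain * sample for gain, sample in zip(row, frame)) for row in matrix]


def rotate_z(angle):
    """Real, frequency independent rotation about z for channels (W, X, Y, Z)."""
    c, s = math.cos(angle), math.sin(angle)
    return [
        [1.0, 0.0, 0.0, 0.0],  # W is unaffected by rotation
        [0.0, c, -s, 0.0],     # X' = X cos(angle) - Y sin(angle)
        [0.0, s, c, 0.0],      # Y' = X sin(angle) + Y cos(angle)
        [0.0, 0.0, 0.0, 1.0],  # Z is unaffected by rotation about z
    ]


# A source directly in front, rotated a quarter turn counter-clockwise,
# should end up directly to the left (all directional energy in Y).
front = [1 / math.sqrt(2), 1.0, 0.0, 0.0]
left = matrix_mix(rotate_z(math.pi / 2), front)
print(left)
```

The matrix is designed once and then applied to every sample frame, with no filtering or convolution involved.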

If an ATK Ambisonic operation is implemented as a kernel operation, we can expect this:

- may require complex coefficients

- may be frequency dependent

- will require more CPU resources

A related implication:

- expect a kernel operation to return a more complex sounding outcome than a matrix operation

For example, FoaEncoderKernel: *newSuper, the ATK's Ambisonic Super Stereo kernel encoder, is usually preferred over FoaEncoderMatrix: *newStereo, the much simpler matrix encoder.

________________

Aside from structuring the architecture, the ATK also uses the matrix and kernel keywords to:

- designate Ambisonic UGens and associated classes

- answer operation assets, i.e., the matrix or kernel

- answer the operation

Here are a few examples:

Kinds of operations

The keyword kind is used to identify individual named operations, which is where we find the problem specific elements of the ATK.

As an example, let's review a specific class: HoaMatrixDecoder. We can return a list of implemented HOA matrix decoders, listing the kinds of decoders this class will design:

The class methods listed here are HOA matrix decoder design methods. When we instantiate an instance with one of these methods, we design a matrix to be used for decoding.

Given an instance, we see that kind returns the name of the design method used:

Or, this and a bit more information, but in natural language:

Of course, if we'd like to review the kinds of design methods available, we can also just visit the help page: HoaMatrixDecoder.

Static & dynamic operations

In finishing up our review of the structure of the ATK, we'll note that numerous soundfield operations are available in both static and dynamic forms. This is a direct result of SuperCollider's implementation as a Music V language, and the requirement to provide direct access to operation coefficients for inspection.

Let's review a specific case: HOA beam decoding. A beam decoder, or beamformer, extracts a single beam from a soundfield. This soundfield operation is equivalent to sampling a soundfield with a single virtual microphone and returning the result.

If we browse to review the UGens for HOA decoding, we'll see two decoders listed:

- HoaDecodeDirection

- Higher Order Ambisonic (HOA) beam decoder

- HoaDecodeMatrix

- Matrix renderer from the Ambisonic Toolkit (ATK)

The first of these, HoaDecodeDirection, is the ATK's dynamic continuous rate HOA beamformer:

Reviewing the argument list, theta, phi and radius may all be varied continuously.

Turning to HoaDecodeMatrix, we see:

The matrix required as an argument is not continuously variable. We design the matrix once with HoaMatrixDecoder:

and then pass it to HoaDecodeMatrix.

Putting this together, the equivalent beamforming use pattern looks like this:

and differs from the dynamic beamformer, HoaDecodeDirection, in that theta and phi are static and fixed at initial design time, and we're missing radius.
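The distinction can be sketched outside SuperCollider. In the Python example below (not ATK code, and not the implementation of HoaDecodeDirection or HoaDecodeMatrix), a first order beam is formed either from weights designed once or from weights recomputed for every frame. It assumes ACN-N3D input, theta as azimuth and phi as elevation in radians, and an unweighted beam shape scaled for unity gain on axis. Radius is omitted entirely, and all names are hypothetical.

```python
import math


def beam_weights(theta, phi):
    """Design first order beam weights (ACN-N3D) for one look direction."""
    x = math.cos(theta) * math.cos(phi)
    y = math.sin(theta) * math.cos(phi)
    z = math.sin(phi)
    root3 = math.sqrt(3)
    # Dividing by the channel count (4) gives unity gain in the look direction.
    return [0.25, 0.25 * root3 * y, 0.25 * root3 * z, 0.25 * root3 * x]


def decode_static(frames, weights):
    """Static form: the weights are designed once and reused for every frame."""
    return [sum(w * s for w, s in zip(weights, frame)) for frame in frames]


def decode_dynamic(frames, thetas, phis):
    """Dynamic form: the look direction may change from frame to frame."""
    return [
        sum(w * s for w, s in zip(beam_weights(theta, phi), frame))
        for frame, theta, phi in zip(frames, thetas, phis)
    ]


# Two frames of a unit plane wave arriving from directly in front.
frames = [[1.0, 0.0, 0.0, math.sqrt(3)]] * 2

fixed = decode_static(frames, beam_weights(0.0, 0.0))
moving = decode_dynamic(frames, thetas=[0.0, math.pi], phis=[0.0, 0.0])
print(fixed, moving)
```

The static form exposes the designed weights for inspection before any audio is processed, while the dynamic form trades that visibility for the ability to steer the beam over time.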

________________

With a little bit of effort, we could construct a network that would allow us to modulate theta and phi. And we could regain the radial functionality, too. However, given the ATK already supplies a dynamic HOA beamformer, there's no point in doing so.

Well then, what is the point of working with HoaMatrix subclass instances?

In many cases, we don't actually want our matrix to be changing. When decoding for a real loudspeaker array we'll design the decoder just once. Another advantage is that we have access to quite a few further details for inspection:

In other words, the static matrix and kernel soundfield operation implementations offer an open framework rather than the closed UGen black boxes found in most composer-facing Ambisonic libraries.

Toolset namespaces

As you'll have observed, the ATK uses a namespace convention to help navigate the component library classes. ScIDE's autocompletion is able to help us find what we're looking for when we type in the code editor.

Create a new document, and type each of the following, observing the choices offered by the autocompletion menu:

Rather than navigating the Help browsing structure, this is the way experienced users often find classes of interest.

Typing Foa quickly brings up classes in the FOA toolset while Hoa returns parallel HOA classes. The Atk namespace organizes library level environment settings and base classes that serve both Foa and Hoa namespaces.

Foa vs Hoa: dynamic UGens

The FOA toolset includes a pseudo-UGen wrapper, FoaTransform, which is intended to offer an interface for dynamic transform operations, and parallels the interface for static transform operations, FoaXform.

In the development of the HOA toolset, we found FoaTransform to be rarely used. As a result, there is no HoaTransform. Instead, UGens are accessed individually, by name:

Foa vs Hoa: static UGens

The FOA toolset's static UGens for encoding and decoding are:

These receive both FOA matrix and kernel instances.

The corresponding HOA UGens are:

As you would expect from the names, these only receive HOA matrix instances.

Foa vs Hoa: matrix classes

The naming pattern for equivalent matrix designers is swapped:

- SetTypeOperation for Foa

- SetOperationType for Hoa

For example:

This difference exists to more easily facilitate the design of matrices for HOA. When we're designing an HOA matrix, we can think in natural language word order and the IDE will quickly answer.

What next?

Try:

From the documentation for ambitools (link: TBD):

"In the current implementation, these [encoding] filters are stabilized with near field compensation filter at radius r_spk = 1 m."

The value listed as the default speaker_radius for the UGen HOAEncoder is 1.07 meters, however. (If the SC-HOA quark is installed, see the Help document or the source code.)

In a personal communication to Joseph Anderson (cc: Florian Grond), dated Tuesday, 1 December 2020, Pierre Lecomte writes:

"I confirm that the near field and near field compensation filters are implemented in ambitools. The default near field compensation distance was set to r_spk=1.07 m, as it was the radius of the Lebedev spherical loudspeaker array I've built during my PhD."

Michael Gerzon, "Experimental Tetrahedral Recording", Studio Sound (1971):

- No. 8, pp 396-398 (August 1971): https://worldradiohistory.com/Archive-All-Audio/Archive-Studio-Sound/70s/Studio-Sound-1971-08.pdf

- No. 9, pp 472, 473 & 475 (September 1971): https://worldradiohistory.com/Archive-All-Audio/Archive-Studio-Sound/70s/Studio-Sound-1971-09.pdf

- No. 10, pp 510, 511, 513 & 515 (October 1971): https://worldradiohistory.com/Archive-All-Audio/Archive-Studio-Sound/70s/Studio-Sound-1971-10.pdf

Microphone labels:

- front-left-up: Lf (left-front-ceiling)

- back-right-up: Rr (rear-right-ceiling)

- front-right-down: Rf (right-front-floor)

- back-left-down: Lr (left-rear-floor)
