Neurofutures2016, a conference hosted by the Allen Institute for Brain Science, along with the University of Washington, Oregon Health & Science University, and the University of British Columbia, explores innovations at the interface of neuroscience, medicine, and neurotechnology. At the June 19th public lecture, a former UW postdoc, now a leading experimental neuroscientist, talked about how she uses optogenetics to probe decision-making in the brain.

In an unmissable keynote speech at Neurofutures2016, Dr. Anne Churchland of Cold Spring Harbor Laboratory gave us a glimpse into how her neuroscience work has developed since her time as a postdoctoral fellow in the UW Department of Physiology and Biophysics. Introduced by the Allen Institute's Executive Director Jane Roskams as a "leading light in neuroscience," Churchland spoke about her investigation into how the brain integrates different pieces of sensory information to guide decisions.

Churchland's goal is to surmount one of the highest hills in neuroscience: building a theoretical bridge from our solid understanding of simple motor reflexes to complex, flexible behaviors such as decision-making. She thinks the best way to begin making that link is to study multisensory integration, the innate ability of the animal brain to combine different pieces of contextual information in the environment, such as auditory, visual, and emotional stimuli, to arrive at a strategic behavior.

The primate brain integrates auditory and visual information in a "statistically optimal way," meaning the most efficient and unbiased way possible, and, as Churchland showed to everyone's surprise, so does the rodent brain. It rapidly estimates the reliability of the cues available at the moment and combines them, relying more on higher-quality cues than on lower-quality ones. For example, we pay closer attention to visual cues from a person's face when we can't quite hear them amid background noise.
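To make "statistically optimal" concrete, here is a minimal sketch of the textbook reliability-weighted (inverse-variance) rule for combining two cues. It assumes each cue gives an independent, Gaussian-noise estimate of the same quantity. The function name, parameter names, and example numbers are hypothetical illustrations, not Churchland's analysis code or data.

```python
def combine_cues(est_auditory, var_auditory, est_visual, var_visual):
    """Combine two noisy estimates by weighting each with its reliability.

    Assumption (not from the talk): each cue is an independent, unbiased,
    Gaussian-noise estimate of the same quantity, so reliability is 1/variance.
    """
    rel_a = 1.0 / var_auditory  # reliability of the auditory cue
    rel_v = 1.0 / var_visual    # reliability of the visual cue

    # Each weight is that cue's share of the total reliability.
    w_a = rel_a / (rel_a + rel_v)
    w_v = 1.0 - w_a

    combined_estimate = w_a * est_auditory + w_v * est_visual
    # The combined variance is never larger than the smaller single-cue variance.
    combined_variance = 1.0 / (rel_a + rel_v)
    return combined_estimate, combined_variance, w_a, w_v


if __name__ == "__main__":
    # Hypothetical numbers: a noisy auditory estimate of 12 events/s
    # and a cleaner visual estimate of 10 events/s.
    estimate, variance, w_a, w_v = combine_cues(12.0, 4.0, 10.0, 1.0)
    print(f"weights: auditory={w_a:.2f}, visual={w_v:.2f}")
    print(f"combined estimate={estimate:.2f}, variance={variance:.2f}")
```

In this illustration the cleaner visual cue receives most of the weight, and the combined estimate is less variable than either cue alone, which is the signature of the multisensory benefit described above.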

Cold Spring Harbor Lab

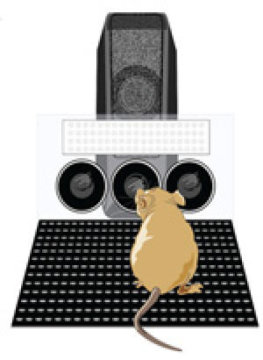

At Neurofutures2016, she explained her experimental paradigm. In her lab setup, a rodent plays in a "behavior box." The animal is presented with sensory stimulation in the form of visual flashes and/or auditory clicks and must judge whether the frequency is high or low. The animals earn a reward if they indicate the correct answer by pressing their nose into one of two ports, and they quickly learn the task. She finds that, to the same extent as observed in primates, rodents perform better when they get both the auditory and the visual stimuli together (multisensory information) than when they get just one type of cue.

Churchland detailed her use of a powerful technology called optogenetics to identify the neural circuits involved in multisensory integration and, as a result, revise unfinished theoretical frameworks in computational neuroscience used to model how information travels in the brain and results in behavior. "Better models would help scientists from around the world to better communicate their findings to each other and make meaningful discoveries. More than ever, we need theoretical scientists and experimentalists to come together to work as a team to interpret the large data sets that are unfolding."

Entering the scene in 2005, optogenetics has given researchers the tools to engineer particular neurons in an animal's brain so that their activity can be switched on or off with flashes of laser light, allowing the role of neurons in a behavioral circuit to be investigated in real time. So far, Churchland has used optogenetics to disrupt the activity of neurons in the mouse brain's posterior parietal cortex (PPC) at the moment when the mouse needs to make the crucial decision of which port to choose. In preliminary experiments, she has gathered evidence that disrupting the PPC is severely detrimental to the animal's performance on trials involving visual information, but much less so on trials involving auditory information, suggesting that the PPC is specifically involved in using visual information to inform behaviors.

"The right theoretical frameworks for multisensory integration are still being developed," said Churchland.

This finding is poised to change widely accepted notions about multisensory integration in the brain. "We used to think that the PPC receives both auditory and visual input in the same way. But now, we can revise this model. These experiments suggest that this area has a big role in processing visual input, possibly helping the animal to discriminate separate sensory events. We are also finding evidence that the actual integration of arriving sensory stimuli happens elsewhere, probably in the frontal lobe," she said.

In the Q&A session, an audience member posed an interesting question about the implications of Churchland's work: "Your talk made me think about how we have cars that beep when we are backing up to indicate how close the car is to an object," he said. "In that situation, the driver is combining visual information from the mirror and the sounds of the beeps to make optimal decisions about driving. Do you ever realize that your ideas are already happening in the world?"

Churchland responded that yes, she thinks a lot about the decisions that people make on the fly when operating a vehicle. "I'd like to understand better, at the level of an individual decision, how recent experience and memory affect our decision-making behavior. For example, if you had performed poorly on the activity the last few times, you might make decisions in a biased way. It would be very useful in designing cars if we had a deeper understanding of how our ability to integrate multisensory signals depends on our past experience, as well as the currently available sensory information. Perhaps there are certain states where you are more receptive and less receptive to certain sensory information."

—Genevieve Wanucha